There’s a clear signal coming out of the latest Gartner research: the economics of AI inference are shifting fast, and the implications for Cloud providers are immediate.

By 2030, performing inference on a large language model with one trillion parameters will cost GenAI providers over 90% less than it did in 2025. That’s a dramatic shift in unit economics, and it points to a future where LLM workloads are far more efficient to run at scale. That should be an advantage for everyone, from small businesses to enterprise-level companies, right?

That’s not the whole story, though: as always in the Cloud world, falling costs are only one side of the equation.

A rapid drop in cost per token

At the center of Gartner’s forecast is the token—the basic unit of data processed by GenAI models. For this analysis, a token is defined as 3.5 bytes of data, or roughly four characters.
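To make that definition concrete, here is a minimal sketch that estimates a token count from the size of a piece of text, assuming the 3.5-bytes-per-token figure quoted above. The function name `estimate_tokens` and the sample prompt are illustrative only, and real tokenizers will produce different counts depending on the model and the language.

```python
import math

# Working definition quoted in the forecast: one token is about 3.5 bytes of data.
BYTES_PER_TOKEN = 3.5


def estimate_tokens(text: str) -> int:
    """Rough token estimate from UTF-8 byte length (hypothetical helper).

    This is a back-of-the-envelope approximation, not a real tokenizer.
    """
    n_bytes = len(text.encode("utf-8"))
    return math.ceil(n_bytes / BYTES_PER_TOKEN)


prompt = "Summarize the quarterly infrastructure report."
print(f"{len(prompt.encode('utf-8'))} bytes is roughly {estimate_tokens(prompt)} tokens")
```

An estimate like this is only useful for rough capacity planning; billing and context limits depend on the tokenizer each model actually uses.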

Explainer: What is an AI token, and how are tokens spent?

An AI token is the smallest unit of text processed by a model, typically representing a few characters or part of a word, and all inference operations—input prompts, intermediate steps, and generated outputs—are measured in tokens.

For Cloud providers, tokens map directly to compute utilization, memory bandwidth, and accelerator time, making them a practical proxy for workload cost and performance. Consumption scales non-linearly: while simple inference requests are lightweight, multi-step agentic workflows can drive significantly higher token throughput due to iterative reasoning, tool use, and context expansion.

At scale, this creates sustained, high-frequency inference loads, where efficient scheduling, model selection, and token-level observability become critical for optimizing infrastructure efficiency and service margins. Lesson over!

The cost of processing those tokens is expected to fall sharply over the next five years. This isn’t being driven by a single breakthrough, but by multiple layers of improvement across the stack.

“These cost improvements will be driven by a combination of semiconductor and infrastructure efficiency improvements, model design innovations, higher chip utilization, increased use of inference-specialized silicon, and application of edge devices for specific use cases,” said Will Sommer, Sr. Director Analyst at Gartner.

For Cloud service providers and hosters, this will feel familiar. It reflects the same pattern seen in compute and storage: efficiency gains compound, costs fall, and workloads scale.

Gartner also notes that by 2030, LLMs will be up to 100 times more cost-efficient than the earliest models of similar size developed in 2022. That’s not incremental improvement—it’s a structural shift in how AI workloads can be delivered.
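For a sense of pace, the two headline figures can be converted into an implied yearly decline. The sketch below assumes a constant compound rate, which is a simplification of ours and not part of the forecast. It also treats "over 90% less" as exactly a 10x reduction over five years, and "up to 100 times" as a 100x reduction over the eight years from 2022 to 2030.

```python
def annual_cost_decline(total_factor: float, years: int) -> float:
    """Constant yearly fractional decline that compounds to a
    total_factor-fold cost reduction over the given number of years."""
    return 1.0 - (1.0 / total_factor) ** (1.0 / years)


# 90% cheaper means cost falls to one tenth: a 10x reduction, 2025 to 2030.
rate_2025_2030 = annual_cost_decline(10, 5)

# Up to 100x more cost-efficient than 2022 models by 2030: 8 years.
rate_2022_2030 = annual_cost_decline(100, 8)

print(f"2025-2030: about {rate_2025_2030:.1%} cheaper each year")
print(f"2022-2030: about {rate_2022_2030:.1%} cheaper each year")
```

Under these assumptions the cost per token would need to fall by roughly 37% a year from 2025 onward, and by roughly 44% a year measured from 2022.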

Different hardware paths, different outcomes

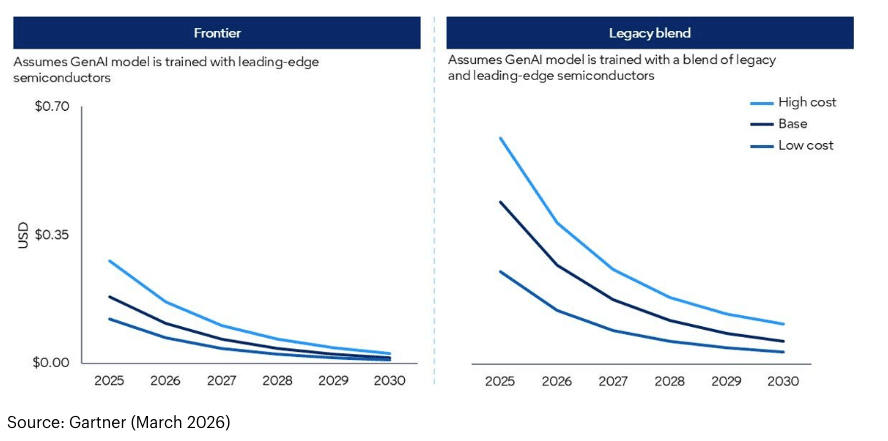

The forecast itself is split across two semiconductor scenarios, which highlight how infrastructure choices will shape cost outcomes.

In “frontier” scenarios, model processing is based on cutting-edge chips, delivering the highest levels of performance and efficiency. In contrast, “legacy blend” scenarios reflect a mix of currently available semiconductors, benchmarked against Gartner’s projections.

Costs in the blended scenarios are significantly higher due to lower computational power, reinforcing a point Cloud providers already understand: not all infrastructure is equal, and hardware strategy plays a direct role in service economics.

As AI inference scales, those differences will become more visible at the platform level.

Why lower costs won’t equal cheaper AI

It would be easy to assume that a 90% drop in inference costs means AI becomes universally cheaper. Gartner is clear that this is not the case.

First, lower token costs will not be fully passed on to enterprise customers. Pricing dynamics, value-based models, and competitive positioning will all shape how those efficiencies are exposed in the market.

Second—and more importantly—the nature of AI workloads is changing.

More advanced systems are emerging that require significantly more tokens to operate. Agentic models, in particular, can consume between five and thirty times more tokens per task than a standard GenAI chatbot. These systems don’t just respond to prompts; they execute multi-step processes and handle more complex workloads.

The result is a shift in consumption patterns. Even as the cost per token falls, total token usage can rise faster than the price drops. It is like fuel prices dropping just as you switch from a Toyota Prius to a Ford F-150: each gallon costs less, but the total bill can still go up.

This creates a familiar Cloud dynamic: lower unit costs, but higher aggregate demand.
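A quick back-of-the-envelope calculation shows how the two effects interact. The sketch below combines the 90% cost reduction with the five-to-thirty-times token multiplier for agentic workloads. Pairing those two figures in one formula is our illustration, not a Gartner calculation, and it assumes the full cost reduction reaches the buyer, which the forecast says it will not.

```python
def relative_spend(cost_reduction: float, token_multiplier: float) -> float:
    """Spend per task relative to today (1.0 means unchanged).

    cost_reduction: fractional drop in cost per token, e.g. 0.90 for 90%.
    token_multiplier: how many times more tokens the new workload uses.
    """
    return (1.0 - cost_reduction) * token_multiplier


def break_even_multiplier(cost_reduction: float) -> float:
    """Token multiplier at which spend per task is back to today's level."""
    return 1.0 / (1.0 - cost_reduction)


for multiplier in (1, 5, 30):
    spend = relative_spend(0.90, multiplier)
    print(f"{multiplier:>2}x tokens -> {spend:.1f}x today's spend per task")

print(f"break-even at about {break_even_multiplier(0.90):.0f}x tokens")
```

With a 90% drop in cost per token, a task that uses five times the tokens still costs about half as much as today, while one that uses thirty times the tokens costs about three times as much. Anything beyond roughly ten times the tokens erases the saving.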

The limits of “cheap intelligence”

Gartner is explicit that falling token costs should not be misunderstood as a full democratization of advanced AI capabilities.

“Chief Product Officers (CPOs) should not confuse the deflation of commodity tokens with the democratization of frontier reasoning,” said Sommer. “As commoditized intelligence trends toward near-zero cost, the compute and systems needed to support advanced reasoning remain scarce. CPOs who mask architectural inefficiencies with cheap tokens today will find agentic scale elusive tomorrow.”

For Cloud providers, this distinction is important. There is a growing gap between commodity AI workloads and frontier-level reasoning, and each requires a different approach to infrastructure, orchestration, and pricing.

Efficiency alone is not enough. Architecture matters.

Where value will be created

As inference becomes more central to AI delivery, Gartner points to a clear direction for where value will accrue in the ecosystem.

Platforms that can orchestrate workloads across multiple models will have a significant advantage. Not every task needs to run on the most advanced—and most expensive—models.

Routine, high-frequency workloads should be routed to smaller, more efficient, or domain-specific models. These can often outperform general-purpose models in specialized contexts, while operating at a fraction of the cost.

At the same time, frontier-level models should be reserved for high-value, complex reasoning tasks where their capabilities justify the expense.

This is not just a technical consideration—it’s a commercial one. Matching workloads to the right model becomes a core part of delivering efficient, scalable AI services.
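The routing idea can be sketched in a few lines. Everything in this example is hypothetical: the tier names, the prices per million tokens, and the single `needs_complex_reasoning` flag stand in for whatever classification a real platform would use. It is a sketch of the principle, not a description of any provider's orchestration layer.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelTier:
    name: str
    usd_per_million_tokens: float  # hypothetical price, for illustration only


@dataclass(frozen=True)
class Task:
    description: str
    needs_complex_reasoning: bool
    estimated_tokens: int


# Two illustrative tiers; the names and prices are assumptions.
SMALL = ModelTier("small-domain-model", 0.20)
FRONTIER = ModelTier("frontier-model", 10.00)


def route(task: Task) -> ModelTier:
    """Send routine work to the small tier, complex reasoning to the frontier tier."""
    return FRONTIER if task.needs_complex_reasoning else SMALL


def estimated_cost(task: Task) -> float:
    """Estimated cost in USD for running the task on its routed tier."""
    tier = route(task)
    return task.estimated_tokens / 1_000_000 * tier.usd_per_million_tokens


tasks = [
    Task("classify support ticket", False, 2_000),
    Task("plan multi-step migration", True, 150_000),
]
for task in tasks:
    print(f"{task.description}: {route(task).name}, ${estimated_cost(task):.4f}")
```

In practice the routing decision would likely draw on richer signals, such as task type, latency targets, or customer tier, but the commercial logic is the same: the expensive model is used only where it earns its cost.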

What this means for the Cloud ecosystem

For Cloud service providers, hosters, and domain platform operators, inference is becoming a primary driver of AI-related demand, and its economics are evolving quickly. Falling token costs will expand the addressable market, enabling more use cases and broader adoption across industries.

At the same time, increasing token consumption and more complex workloads will place greater emphasis on how services are designed and delivered.

Infrastructure efficiency remains important, but it is only part of the picture. The ability to manage workloads across different models, optimize cost-performance trade-offs, and support increasingly sophisticated applications will define competitive advantage.

This is where the next phase of differentiation begins.